Background

The ale yeast pitching rate generally recommended by commercial brewers is one billion cells, per liter of wort, per degree Plato. Assuming a 25% loss in viability prior to re-pitching results in the rule of thumb of 0.75 billion/L-°P. However, yeast products designed to inoculate at this level are not available on the homebrew scale. Directly pitching a vial or pack of commercial yeast without a starter results in about half of the recommended pitching rate. The effects of under-pitching are varied, but generally held to be undesirable. Wyeast Laboratories, for example, states that under-pitching can cause “excess levels of diacetyl, [an] increase in higher/fusel alcohol formation, [an] increase in ester formation, [an] increase in volatile sulfur compounds, high terminal gravities, stuck fermentations, [and an] increased risk of infection”.
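As a rough illustration of the arithmetic behind that rule of thumb, the sketch below computes the cell count a batch would call for. The 12 °P gravity, the 18.9 L (about five US gallons) volume, and the function name are illustrative assumptions, not figures from this experiment.

```python
def cells_needed_billion(rate_b_per_l_plato, volume_l, gravity_plato):
    """Target cell count, in billions, for a given pitching rate.

    rate_b_per_l_plato: billions of cells per liter per degree Plato.
    """
    return rate_b_per_l_plato * volume_l * gravity_plato


# Hypothetical batch: 18.9 L (about five US gallons) of 12 °P wort,
# pitched at the 0.75 billion/L-°P rule of thumb.
target = cells_needed_billion(0.75, volume_l=18.9, gravity_plato=12.0)
print(f"Target pitch: {target:.0f} billion cells")
```

By the same arithmetic, a direct pitch at about half the recommended rate would deliver roughly half of that target.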

Among home brewers, however, the effects of under-pitching are less universally agreed upon, with some brewers maintaining that under-pitching has no impact, or at least no negative impact, on the final product. Many others pitch multiple yeast products, or make starters prior to brewing, in the belief that it will result in better beer. To test these assertions, a controlled experiment needed to be conducted.

Experimental Setup

In order to provide a reasonable baseline for comparison, I brewed six gallons of an American Amber Ale, targeting a BU:GU ratio of about 0.5. The idea was to come up with a middle-of-the-road representation of a style that would be accessible to most craft beer drinkers. After chilling, the wort was split into two plastic bucket fermenters, with one pitched at 0.73 billion cells/L-°P (“control”), and the other at 0.29 B/L-°P (“under-pitched”), which is roughly the pitching rate that would result from using a month-old smack pack in a five-gallon batch. Since the cell counts used to calculate the pitching rates are based on slurry volume rather than a true count, the associated error is high – I would suggest ±10%. More complete information on the beer brewed and the yeast propagation procedures can be found in the experiment proposal and yeast ranching posts, respectively.
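To see what a ±10% error does to the two pitching rates, here is a minimal sketch. It treats the error as a simple symmetric relative bound, which is an assumption made for illustration, and the function name is hypothetical.

```python
def rate_bounds(rate, rel_error=0.10):
    """Return (low, high) for a pitching rate with a symmetric relative error."""
    return rate * (1 - rel_error), rate * (1 + rel_error)


control = rate_bounds(0.73)  # billion cells/L-°P
under = rate_bounds(0.29)    # billion cells/L-°P

# The ranges overlap only if the top of the under-pitched range
# reaches the bottom of the control range.
overlap = under[1] >= control[0]

print(f"control:       {control[0]:.3f} to {control[1]:.3f}")
print(f"under-pitched: {under[0]:.3f} to {under[1]:.3f}")
print(f"ranges overlap: {overlap}")
```

Even at the edges of that error estimate, the two rates stay well separated.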

Seventeen sample sets of three bottles each were distributed to home brewers from Oregon to Florida. One (presumably) broke in transit and was not delivered, and there was one non-respondent. The 15 effective sets resulted in feedback from 37 individual tasters. Twenty-three received “Set 1”, which contained two controls and one under-pitched sample, with the remaining fourteen having “Set 2”, with two under-pitched beers and one control. All sets were labeled only as “A”, “B”, and “C”, resulting in a blind triangle test. Aside from being recruited via a topic on a brewing forum, no effort was made to establish the participants’ credentials with regard to brewing or judging beer. I’d like to think they can therefore be considered a representative sample of the (online) home brewing community.

The chief difficulty associated with collecting impressions from such a widely divergent group of respondents is converting them into unambiguous numerical data. My first thought was to have each taster complete a standard BJCP Scoresheet, but there were several obvious problems with that method. First, filling out the scoresheet can be a daunting, time-consuming task, especially considering that most respondents were not BJCP judges and would probably be completing it for the first time. Second, since by design it addresses only a single beer, a minimum of three sheets per “judge” would be required. Finally, since it lacks room for additional, comparative questions, at least one additional sheet, for a total of four, would be required. In order to minimize the amount of paper and the time commitment required, I laid out a simple feedback form, loosely based on the BJCP scoresheet, which was distributed to all participants.

In order to obtain objective, internally consistent data, two different methods were used. First, the volunteers were asked to perform two simple, quantifiable tasks: identify the control and under-pitched beers; and express a preference for one or the other. In addition to providing these definitive, binary answers, each respondent was also able to share more detailed impressions about appearance, aroma, flavor, and mouthfeel. The descriptive adjectives used were then compiled by means of a simple frequency count. In order to simplify the dataset as much as possible, some descriptors were combined – first, variations on the same root word (“malt” and “malty”, e.g.), then words with substantially similar meanings (“hot” and “solventy”, e.g.).
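The frequency count described above can be sketched in a few lines. The merge map below contains only the two examples mentioned in the text, and the sample descriptors are made up for illustration; neither reflects the full dataset or the exact procedure used.

```python
from collections import Counter

# Merge map built from the two examples in the text; a real map would be longer.
MERGE = {"malt": "malty", "hot": "solventy"}


def count_descriptors(words):
    """Count descriptors after normalizing case and merging similar terms."""
    counts = Counter()
    for word in words:
        key = word.strip().lower()
        counts[MERGE.get(key, key)] += 1
    return counts


# Hypothetical descriptors, not taken from the actual feedback forms.
sample = ["Malt", "malty", "hot", "solventy", "bitter", "Malty"]
counts = count_descriptors(sample)
print(counts.most_common())
```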

Personal Observations

My personal tasting notes for the two beers should be taken with a grain of salt, since they don’t represent a blind tasting, but the photographs are so dramatic that I thought they should be included. The photos show, left and right, the under-pitched and control beers. The first photo was taken two minutes after the beers were poured; the second after they had been drunk over the course of about 35 minutes.

The under-pitched beer is slightly lighter in color (10.5-11 SRM instead of 12), and has minimal head retention or lacing when compared to the control beer. The aroma is malty with a slight hot alcohol character, whereas the control has a perfumy, floral hop aroma, with the malt more subdued. The under-pitched beer has a vaguely spicy or vegetal off-flavor, particularly in the aftertaste, and a slightly solventy finish. The control also has a peppery or spicy taste (which I would attribute to the use of Munich malt), but a lingering citrus flavor predominates. The mouthfeel of the under-pitched beer is thin and astringent compared to the relative fullness of the control, although it’s difficult to make a fair comparison due to the difference in head retention. The control may have a very slightly more compact, “stickier” yeast sediment.

Results

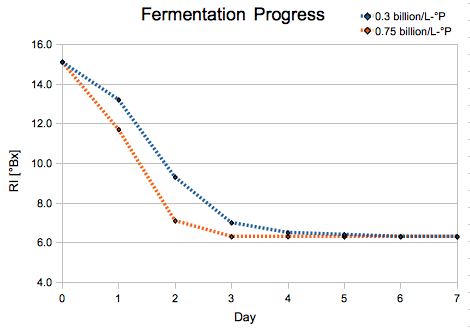

As a means of gauging fermentation performance, a refractometer reading was taken every twenty-four hours after pitching. The difference in the time required to start and finish fermentation is striking.

The control beer not only exhibited faster fermentation to begin with, but reached terminal gravity approximately twice as fast as the under-pitched fermenter. This provides a clear rationale for the use of higher pitching rates in commercial breweries, where fermenter time is extremely valuable. It could also point to a potential advantage for the higher pitching rate in allowing the yeast to out-compete any contaminating microbes.

Of the 30 tasters who attempted to differentiate the beers, thirteen were able to do so, with nine tasters correctly identifying the beers. This seems to support a hypothesis that there is a difference between the beers – if the three samples were truly indistinguishable, ten respondents could be expected to differentiate the beers, and five identify them.

Since the results follow a binomial distribution (a given sample is either identified or not, with no middle ground), the probability that these results are purely due to chance can be assessed. If every taster were simply guessing, with a one-in-three chance of picking out the odd sample, 13 or more of 30 respondents would be expected to differentiate the samples about 16.6% of the time. Based on these results, one can conclude, with 83% confidence, that the two beers do in fact taste different.
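For anyone who wants to check the 16.6% figure, a minimal sketch of the binomial tail calculation follows. It assumes a one-in-three chance of picking the odd sample by guessing, which matches the ten-of-thirty expectation given earlier; the function name is just for illustration.

```python
from math import comb


def binomial_tail(n, k, p):
    """P(X >= k) for X ~ Binomial(n, p)."""
    return sum(comb(n, i) * p**i * (1 - p) ** (n - i) for i in range(k, n + 1))


# 13 or more of 30 tasters picking the odd sample by pure guessing.
p_value = binomial_tail(n=30, k=13, p=1 / 3)
print(f"P(13 or more of 30 by chance) = {p_value:.3f}")
```

The result comes out at about 0.166, in line with the figure quoted above.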

But what, specifically, are the differences between the beers? In addition to the more subjective descriptors I’ll get to in a bit, we can make a reasonable inference from the 13 tasters who correctly differentiated the samples. If the beers tasted different, but those differences revealed nothing about their identities, we would expect half of the 13 to guess correctly; in fact, the probability that nine or more would guess correctly is only 13.3%. This leads me to believe that at least some of the flavors generally associated with under-pitching – increased esters, fusel alcohols, diacetyl, acetaldehyde, etc. – are in fact present.
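The 13.3% figure can be checked by hand, since a 50/50 guess makes every outcome equally likely. Here is a sketch of the sum, writing X for the number of correct identifications among the 13 differentiating tasters (the symbol is introduced here purely for illustration):

```latex
P(X \ge 9) = \sum_{k=9}^{13} \binom{13}{k} \left(\tfrac{1}{2}\right)^{13}
           = \frac{715 + 286 + 78 + 13 + 1}{8192}
           = \frac{1093}{8192} \approx 0.133
```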

Twenty-four of the participants expressed a preference for one beer over the others, with the control being preferred sixteen to eight. When weighted appropriately for the number of samples, the control beer was preferred by 54.9% of tasters. Interestingly enough, this would seem to suggest that while the beers almost certainly are different, there is no consensus about which is better. Simply put, nearly half of people prefer under-pitched beers. An argument could be made, though, that only the opinions of the participants who actually differentiated the samples should be considered. And the data would seem to bear that out. Five of the differentiating tasters preferred the under-pitched beer; however, fully eight of the thirteen tasted Set 2, and when weighted accordingly they overwhelmingly prefer the control, 65.8% to 34.2%.

It is also worth noting that the first half of the respondents (those who tasted the beers after four weeks in the bottle or less) also preferred the control about two to one. So it’s entirely possible that additional conditioning time can reduce the off-flavors that result from under-pitching, but another controlled experiment, with the date of the tasting as a variable, would be needed to satisfactorily answer that question.

Finally, we come to the subjective tasting results. I think the above image largely speaks for itself, so I won’t elaborate too much further. The ten most common descriptors for the control beer, with tied descriptors sharing a rank, are:

1: Malty

2: Hoppy

3: Bitter

4: Fruity

4: Sweet

6: Estery

6: Smooth

8: Thin

9: Solventy

9: Clean

9: Dry

9: Cidery

For the under-pitched beer, they are:

1: Bitter

2: Malty

3: Hoppy

4: Astringent

5: Fruity

5: Estery

5: Solventy

8: Clean

9: Smooth

9: Thin

Based on the relative frequencies of some words, I think one can reasonably conclude that the under-pitched beer was perceived to be more bitter, more astringent, more solventy, less sweet, and – bizarrely – cleaner than the beer using the standard rate. Obviously, the increased perception of negative characteristics makes a persuasive case for the use of higher pitching rates.

Summary

- No difference in attenuation was observed, but fermentation in the control finished twice as quickly.

- Under-pitching negatively impacts head retention and lacing.

- There is a 43% chance that an “average” home brewer will be able to distinguish between under-pitched and standard-pitched ales.

- There is a 30% chance that he will be able to identify which beer is which.

- Overall, home brewers exhibit no strong preference for either beer.

- Among tasters who can differentiate the two beers, the standard pitching rate is preferred nearly two to one.

- The control beer was described as malty, hoppy, bitter, fruity, and sweet.

- The under-pitched beer was described as being more bitter, more astringent, more solventy, less sweet, and cleaner than the control.

Summary of the Summary

Using a starter makes better beer.

Download the full dataset:

pitchrate_experiment.ods | pitchrate_experiment.xls

Totally awesome! I am convinced that using starters is the way to go. In fact, I might even start doing it for dry yeasts as well. You have changed my mind.

Excellent article, by the way. Well written. If you haven’t submitted this to Brew Your Own, I suggest that you should. In fact, I plan to email them now and suggest that they look this over and publish this.

Thanks for everything you did.

Thanks Jez. Be careful making starters with dry yeast though – if they aren’t HUGE starters (1.5 gal minimum), you can actually get more cell die-off than new growth. Rehydrating is a different story, and from my understanding is a very good idea.

I saw your post with the tasting results too. Make sure you let me know when you’re going to be in Indy.

Cool. Yeah, I’ll be back in Indy with the family June 22 & 23. Just a day stop earlier this week.

Wow. It sure did hit the fan over at the AHA website, huh? I think I’m on page 3.

Overall, after reading the comments on both sites (to this point), I think that what the results show to me is that there are a lot more variables than just the starter. I mean, it kind of gets overlooked, but even you stated on the NB forum that you were going for more of a yeast showcase (let me know if I’m misinformed). I wonder what the difference would be, what people’s opinions would be, say if you made this about 50 IBU and dry-hopped it? Would there be a way to tell the difference?

Again, I’ve been made a big believer in starters after this. But even DENNY picked the control. So my main takeaway, which I think I put in my blogpost, is that your average beerdrinker (and I don’t really consider myself much above average because my main philosophy is “If I like a beer, I like a beer and I’m happy”) probably couldn’t tell the difference between the two unless you told them something was different.

Again, thanks.

[…] from a similar test: http://seanterrill.com/2010/05/09/yeast-pitching-rate-results/ Again, both reached the same FG, but one took longer to do it and there were differences in the […]

Nice work

I liked the research. I’d just like to point out for the general public that an 83% confidence level is not considered statistically significant in a scientific setting, though this could just be due to the small sample size.

It’s good to see some actual experimentation being done on this subject, it’s hard to find any concrete data on this subject. Good job.

A couple points in your analysis were a little bit unsound though — first of all, an 83% confidence rating is not statistically significant. There’s almost a 1 in 5 chance that such a result was a false positive. Now, 83% confidence is better than no data, but it’s worth emphasising that this result is not a knock-down proof, and would be too weak to publish in a journal.

Second, there’s a flaw in your reasoning “An argument could be made, though, that only the opinions of the participants who actually differentiated the samples should be considered.” Because underpitching is widely considered to be a bad thing in the brewing community, you’d expect the tasters to be more critical of the beer they had identified as underpitched, even if they identified incorrectly. Even if you had measured a 50/50 success rate for identifying the underpitched beer, and if there was no difference in actual quality of the beers, you’d still expect to measure a decreased perceived quality for the underpitched beer if you only sampled those who guessed right (the “this is the beer that’s supposed to taste worse” effect). You can’t just exclude one of the arms of the trial after the fact to make your result more convincing.

I’d have liked to see an analysis of the significance of the differences between the descriptors, too; a couple of them look like they would be statistically significant, which is interesting — especially considering that “estery” does not look like it’s one of the statistically significant results, which contradicts the received wisdom on the negative effects of underpitching. Perhaps “estery” flavours are harder to discriminate, and this part of the experiment would be better performed on elite taste testers?

Anyway, I’m not trying to be hard on you, just giving some feedback on ways you could improve on this experiment in the future, if you’re so inclined.

If I am making an IPA and using US Safeale05 right out of the packets should I pitch 2 packages knowing that about 50%

That should work, but why not just rehydrate?